Part 4: DBA’s guide to managing sandboxes

This is the next part in my ongoing series of posts on how to successfully manage sandboxes within an Oracle data warehouse environment. In Part 1 I provided an overview of sandboxing (key characteristics, deployment models) and introduced the concept of a lifecycle called BOX’D (Build, Observe, X-Charge and Drop). In Part 2 I briefly explored the key differences between data marts and sandboxes. Part 3 explored the Build phase of our lifecycle.

In this post I am going to focus on the Observe phase. At this stage in the lifecycle we are concerned with managing our sandboxes. Most modern data warehouse environments will be running hundreds of data discovery projects, so it is vital that the DBA can monitor and control the resources that each sandbox consumes by establishing rules that apply both across all projects and to each individual project.

In most cases, DBAs will set up a sandbox with dedicated resources. However, this approach does not make efficient use of resources, since unused resources cannot be shared with other projects. The key advantage of Oracle Multitenant is its unique approach to resource management. The only realistic way to support thousands of sandboxes, which in today’s analytically driven environments is entirely possible if not inevitable, is to allocate one chunk of memory and one set of background processes for each container database, shared by all of the pluggable databases it hosts. This provides much greater utilisation of existing IT resources and greater scalability as multiple pluggable sandboxes are consolidated into the multitenant container database.

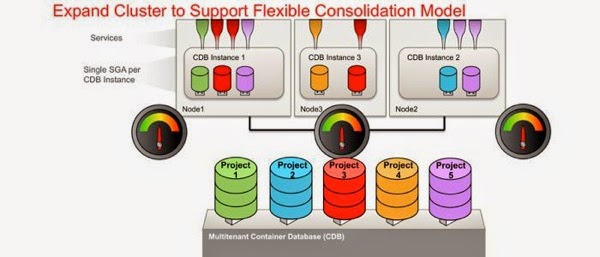

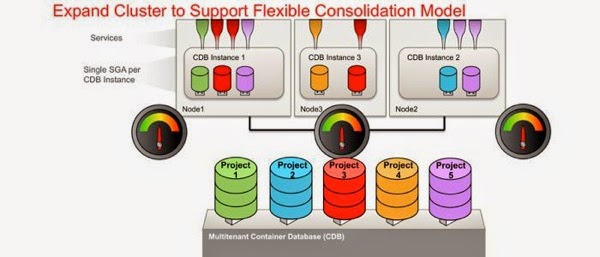

Using Multitenant we can now expand and reduce our resources as required to match our workloads. In the example below we are running an Oracle RAC environment with two nodes in the cluster. You can see that only certain PDBs are open on certain nodes of the cluster; this is achieved by opening the corresponding services on those nodes as appropriate. In this way we are partitioning the SGA across the various nodes of the RAC cluster, which gives us the scalability we need for managing lots of sandboxes. At this stage we have a lot of project teams running large, sophisticated workloads, which is causing the system to run close to capacity, as represented by the little resource meters.

It would be great if our DBA could add some additional processing power to this environment to handle this increased workload. With 12c we can simply drop another node into the cluster, which allows us to spread the processing of the various sandbox workloads out across the expanded cluster.

Now our little resource meters are showing that the load on the system is a lot more comfortable. This shows that the new multitenant feature integrates really well with RAC. It’s a symbiotic relationship whereby Multitenant makes RAC better and RAC makes Multitenant better.

So now that we can add resources to the cluster, how do we actually manage resources across each of our sandboxes? As a DBA I am sure that you are familiar with the features in Resource Manager that allow you to control system resources: CPU, sessions, parallel execution servers, Exadata I/O. If you need a quick refresher on Resource Manager then check out this presentation by Dan Norris, “Overview of Oracle Resource Manager on Exadata”, and the chapter on resource management in the 12c DBA guide.

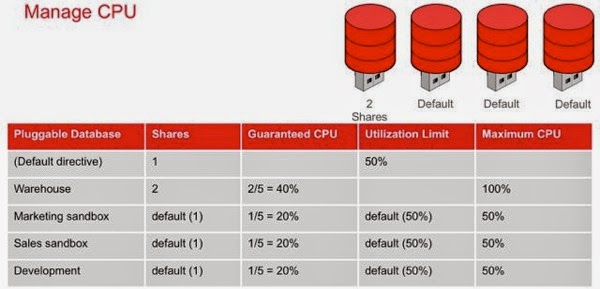

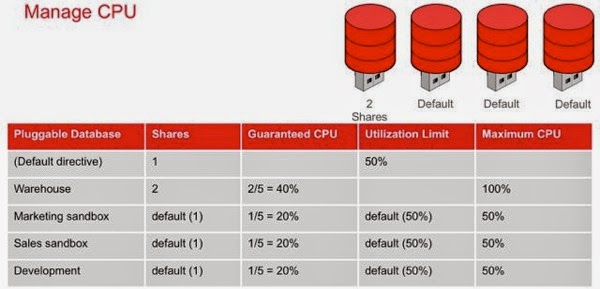

With 12c, Resource Manager is now multitenant-aware. Using Resource Manager we can configure policies to control how system resources are shared across the sandboxes/projects. These policies control how resources are utilised across PDBs, creating hard limits that can enforce a “get what you pay for” model, which is an important point when we move forward to the next phase of the lifecycle: X-Charge. Within Resource Manager we have adopted an “industry standard” approach to controlling resources based on two notions:

- a number of shares is allocated to each PDB

- a maximum utilization limit may be applied to each PDB

As you can see, there are four total shares: two for the data warehouse and one each for our two sandboxes. This means that our data warehouse is guaranteed 50% of the CPU whatever else is going on in the other sandboxes (PDBs). Similarly, each of our sandbox projects is guaranteed at least 25%. However, in this case we did not specify settings for maximum utilisation. Therefore, our marketing sandbox could use 100% of the CPU if both the data warehouse and the sales sandbox were idle.
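To make the arithmetic behind shares and utilisation limits concrete, here is a minimal Python sketch. It is only a simplified model of the percentages described above, not how Resource Manager schedules CPU internally, and the PDB names and function names are hypothetical ones I have made up for illustration.

```python
def guaranteed_cpu(shares):
    """Return each PDB's guaranteed CPU percentage from its share count."""
    total = sum(shares.values())
    return {pdb: 100.0 * n / total for pdb, n in shares.items()}


def available_cpu(shares, active, limits=None):
    """Return the CPU percentage each active PDB could reach.

    Shares belonging to idle PDBs are ignored, so the active PDBs split
    the CPU in proportion to their own shares. An optional per-PDB
    utilisation limit (a percentage) caps the result. Simplification:
    CPU left unused because of a cap is not handed to other PDBs here.
    """
    limits = limits or {}
    active_total = sum(shares[pdb] for pdb in active)
    result = {}
    for pdb in active:
        pct = 100.0 * shares[pdb] / active_total
        result[pdb] = min(pct, limits.get(pdb, 100.0))
    return result


# Hypothetical PDB names matching the example in the text.
shares = {"data_warehouse": 2, "sales_sandbox": 1, "marketing_sandbox": 1}

# Guaranteed minimums: 50% for the warehouse, 25% for each sandbox.
print(guaranteed_cpu(shares))

# Only marketing is busy and no limit is set: it can use 100%.
print(available_cpu(shares, active=["marketing_sandbox"]))

# Same situation with an assumed 50% utilisation limit on marketing.
print(available_cpu(shares, active=["marketing_sandbox"],
                    limits={"marketing_sandbox": 50.0}))
```

The last call shows why the utilisation limit matters for a “get what you pay for” model: without it, an otherwise idle system lets one sandbox take everything.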

By using the “Default” profile we can simplify the whole process of adding and removing sandboxes/PDBs. As we add and remove sandboxes, the system resources are correctly rebalanced across all the plugged-in sandboxes/PDBs, using the settings specified in the default profile, as shown below.
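The rebalancing effect can be sketched with the same kind of arithmetic. This is a rough illustration only: I am assuming that a PDB without its own settings picks up a default of one share, and the PDB names (including `finance_sandbox`) and the `rebalance` helper are hypothetical.

```python
DEFAULT_SHARES = 1  # assumption: PDBs without explicit settings get one share


def rebalance(explicit_shares, plugged_in):
    """Return the guaranteed CPU percentage for every plugged-in PDB.

    PDBs that have no explicit share setting fall back to DEFAULT_SHARES.
    """
    effective = {pdb: explicit_shares.get(pdb, DEFAULT_SHARES)
                 for pdb in plugged_in}
    total = sum(effective.values())
    return {pdb: round(100.0 * n / total, 1) for pdb, n in effective.items()}


# Only the data warehouse has an explicit setting; sandboxes use the default.
explicit = {"data_warehouse": 2}

before = rebalance(
    explicit, ["data_warehouse", "sales_sandbox", "marketing_sandbox"])
after = rebalance(
    explicit,
    ["data_warehouse", "sales_sandbox", "marketing_sandbox", "finance_sandbox"])

print(before)  # warehouse 50%, each sandbox 25%
print(after)   # warehouse 40%, each sandbox 20%
```

Plugging in the fourth PDB needs no new per-sandbox settings in this model; the guarantees simply shift from 50/25/25 to 40/20/20/20.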

Summary

In this latest post on sandboxing I have examined the “Observe” phase of our BOX’D sandbox lifecycle. With the new multitenant-aware Resource Manager we can configure policies to control how system resources are shared across sandboxes. Using Resource Manager it is possible to configure a policy so that the first tenant in a large, powerful server experiences a realistic share of the resources that will eventually be shared as other tenants are plugged in.

In the next post I will explore the next phase of our sandbox lifecycle, X-Charge, which will cover the metering and chargeback services for pluggable sandboxes.

Technorati Tags: Data Warehousing, Database 12c, Sandbox
