Part 3: DBA's guide to building and deploying sandboxes

Recently I started a series of blog posts covering the need for a private, secure and safe area for data discovery within the data warehouse ecosystem. The most common name for this type of area is a “sandbox”. The demand for sandboxing is growing rapidly as many companies start exploring the new types of data streams linked to “big data”. In the first post of the series I gave an overview of the basic elements of sandboxes in the cloud. In the second post I looked at the differences between sandboxes and data marts. As part of those first posts I put forward the idea of a sandbox lifecycle model, as shown below, and in this post I am going to explore the first stage of that lifecycle model: the BUILD stage.

Many companies are actively working on lots of different big-data-led projects: customer sentiment analysis, clickstream analysis, product/service recommendations, real-time event monitoring, etc. As a result, many project teams want to evaluate a wide variety of new data sources inside their own private space. They are looking for new customers or segments to target, new product opportunities, new ways to retain customers and ways to predict system faults before they happen. Consequently, DBAs are being flooded with requests to create new sandbox environments. The process of building, or deploying, a sandbox can be based on one of two approaches:

You can take the Workspace Manager what-if analysis tutorial by following the steps on the Oracle Learning Library page: https://apex.oracle.com/pls/apex/f?p=44785:24:15780601154836::NO:24:P24_CONTENT_ID%2CP24_PREV_PAGE:4553%2C29.

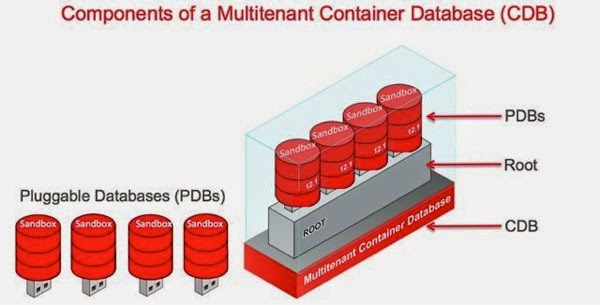

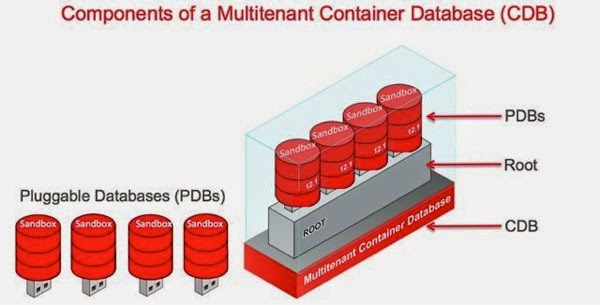

With the new multitenant architecture featuring pluggable databases (sandboxes) it is now possible to create a single container database that allows multiple sandboxes to be simply plugged in. Below is a simple overview of the main multitenant terminology. When we start to create a new multitenant sandbox environment we plug our pluggable sandboxes into the root database. The combination of root database and pluggable sandboxes (PDBs) is referred to as a “Container Database” or CDB, as shown below:

- Deliver an empty environment: the project team then finds the required data sources and loads them into the sandbox before starting their analysis

- Deliver a pre-populated environment: the project team loads any additional new data sources into the sandbox before starting their analysis

Building Sandboxes pre-Database 12c

There are a number of ways to create sandboxes using pre-12c versions of the Oracle Database. The two most popular choices that I have seen used are Database Configuration Assistant and Workspace Manager.

Database Configuration Assistant

Creating an empty sandbox is relatively easy for both DBAs and business users. The Database Configuration Assistant (DBCA) provides a wizard for creating and configuring an empty, but ready to go, database. This tool is very flexible and allows DBAs to control all aspects of the database creation process. For most business users the wizard provides far too many parameters to configure, so Oracle provides three default configuration templates which supply default values for all the main configuration parameters. The pre-built templates are: general purpose, data warehouse and custom. The DBA can add templates for specific types of sandboxes by simply creating new template files; this is covered in the database documentation (see here: http://docs.oracle.com/cd/E16655_01/server.121/e17643/install.htm#BABEGFCG). The information in each template includes details of database options, initialisation parameters, and storage attributes (for data files, tablespaces, control files, and online redo logs). A common approach might be to create templates to configure large, medium and small sandboxes with appropriate settings for the specific hardware platform being used.

Once the new sandbox is ready the business users/data scientists can then start loading data. I am not going to go over the data loading process; however, please note that this can be done using a data integration tool such as Oracle Data Integrator (ODI) or flat files. In fact ODI now supports a scripting language called Groovy, which allows ETL developers to create an easy-to-use dialog for selecting a table/view from a source system and copying its data to a target schema. For more information about Groovy scripting in ODI, check out the posts on the data integration blog: https://blogs.oracle.com/warehousebuilder/tags/groovy.
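Returning to the idea of small, medium and large DBCA templates: here is a minimal Python sketch of how a DBA might script the request, by building a DBCA silent-mode command line from a chosen size. The template file names, memory figures and the sandbox name are hypothetical, chosen only for illustration; the `dbca` options used (`-silent`, `-createDatabase`, `-templateName`, `-gdbname`, `-sid`, `-totalMemory`) should be checked against the DBCA documentation for your release before relying on them.

```python
import shlex

# Hypothetical sandbox sizes. The template file names and memory figures
# (in MB) are illustrative assumptions, not values from Oracle documentation.
SANDBOX_SIZES = {
    "small": {"template": "sandbox_small.dbt", "total_memory_mb": 2048},
    "medium": {"template": "sandbox_medium.dbt", "total_memory_mb": 8192},
    "large": {"template": "sandbox_large.dbt", "total_memory_mb": 32768},
}


def dbca_command(name, size):
    """Build a dbca silent-mode command line for an empty sandbox database.

    Passwords are deliberately left out of the sketch; a real run would
    also need to supply the SYS and SYSTEM passwords securely.
    """
    spec = SANDBOX_SIZES[size]  # raises KeyError for an unknown size
    args = [
        "dbca", "-silent", "-createDatabase",
        "-templateName", spec["template"],
        "-gdbname", name,
        "-sid", name,
        "-totalMemory", str(spec["total_memory_mb"]),
    ]
    # Quote each argument so the result is safe to paste into a shell.
    return " ".join(shlex.quote(arg) for arg in args)


if __name__ == "__main__":
    print(dbca_command("sbx01", "small"))
```

Keeping the sizes in one dictionary means the DBA changes the definition of "small" or "large" in one place, which is the same reason for using templates in the first place.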

Overall, creating empty sandboxes using DBCA is a simple process. However, this approach does mean that data is replicated from the original database and each instance requires its own dedicated hardware resources (I/O, CPU and memory) which cannot be easily transferred to other sandboxes. Every time a new sandbox is required the DBA and systems administrators have to ensure that enough storage, CPU and memory are available, both for managing the data and for all the typical database background processes. As more and more sandboxes are requested and deployed, these replicated overheads quickly consume the available server resources, and this limits the number of sandboxes that can be run on a specific platform. Having dedicated, non-sharable resources is extremely limiting and very costly.

Many teams find that trying to get approval to create a new database is a long and painful process. Sometimes it can take months to work through the bureaucracy and ensure all the pieces are in place ready to create the new database: storage, processing nodes, network connectivity, etc.

Key benefits: relatively simple process for creating new, empty databases

Key issues: requires dedicated system resources; cannot copy an existing database with its schemas and data; no simple mechanism for moving discoveries (objects, data, etc.) to the production environment; may take a long time to provision due to internal bureaucracy

Workspace Manager

If projects require pre-populated sandbox environments then creating a sandbox with access to existing data is best done using Oracle Workspace Manager. This provides the ability to manage current, proposed and historical versions of data within the same database instance so there is no need to move data. Workspace Manager creates a self-contained virtual area within a database where users can update existing data without affecting the original data. This virtual area isolates all the changes until they are explicitly merged with production data or discarded. This means there is no need to duplicate existing data and any new data can be loaded in a controlled way without impacting the production data.

Users in a workspace always see a transactionally consistent view of the entire database; that is, they see changes made in their current workspace plus the original data in the database as it existed either when the workspace was created or when the workspace was last refreshed with changes from the parent workspace. Workspace Manager even supports a hierarchy of workspaces for very complex sandbox requirements involving multiple teams and/or project goals.

There is a great example of how to use Workspace Manager for what-if analysis on the Oracle Learning Library. The step-by-step tutorial shows you how to use workspaces to do location-based analysis on current and proposed data. The fictitious company MyCompany is planning to build a new warehouse to provide better service to its customers. Two potential sites are under consideration. The company wants to use SQL to analyse the prospective sites. To do this, data for both prospective sites must be entered into the production warehouse schema. However, this new data must be kept separate from the existing production data so that it does not impact the work of employees who are not part of the site selection team. Using Workspace Manager it is possible to isolate data for each of the prospective sites and allow the two site selection teams to work concurrently. This use case creates the following hierarchy of workspaces, as shown below:

Figure: version-enabled warehouse table and associated team workspaces: SITE1, SITE2, and LIVE.
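To give a feel for what the site-selection hierarchy involves, here is a small Python sketch that generates the kind of `DBMS_WM` calls a DBA might run in SQL*Plus: version-enable a table, then create the SITE1 and SITE2 workspaces as children of LIVE. The table name `WAREHOUSES` is a hypothetical stand-in (the tutorial's actual object names are not reproduced here), and the script only prints statements; it does not connect to a database.

```python
def workspace_script(table, workspaces, parent="LIVE"):
    """Return SQL*Plus commands that version-enable a table and create
    one child workspace per team under the given parent workspace."""
    lines = [f"EXECUTE DBMS_WM.EnableVersioning('{table}');"]
    for ws in workspaces:
        # CreateWorkspace makes the new workspace a child of the current
        # workspace, so move to the parent first.
        lines.append(f"EXECUTE DBMS_WM.GotoWorkspace('{parent}');")
        lines.append(f"EXECUTE DBMS_WM.CreateWorkspace('{ws}');")
    return lines


def finish_script(chosen, rejected):
    """Merge the chosen workspace into its parent and discard the other."""
    return [
        "EXECUTE DBMS_WM.GotoWorkspace('LIVE');",
        f"EXECUTE DBMS_WM.MergeWorkspace('{chosen}');",
        f"EXECUTE DBMS_WM.RemoveWorkspace('{rejected}');",
    ]


if __name__ == "__main__":
    # 'WAREHOUSES' is a hypothetical table name used for illustration.
    for line in workspace_script("WAREHOUSES", ["SITE1", "SITE2"]):
        print(line)
    for line in finish_script(chosen="SITE1", rejected="SITE2"):
        print(line)
```

Each team would then call `DBMS_WM.GotoWorkspace` for its own workspace before loading or changing data, so its changes stay isolated until they are merged or removed.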

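One of the challenges of using workspaces is resource management. All the workspaces share the resources of a single database instance, so it is difficult to increase or decrease the resources available to an individual sandbox, and that ability is important for overall resource utilisation.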

Obviously at some point users will want to add new data into the workspace and, as with the DBCA approach, ETL developers can use Groovy scripting in ODI to create an easy-to-use dialog for selecting a table/view from a source system and copying its data to a target schema. For more information about Groovy scripting in ODI, check out the posts on the data integration blog: https://blogs.oracle.com/warehousebuilder/tags/groovy.

Key benefits: relatively simple process; no need to copy/clone data; easy to move discoveries to production

For more information about Oracle Workspace Manager visit the product home page on OTN: http://www.oracle.com/technetwork/database/enterprise-edition/index-087067.html.

Key issues: requires careful workload management; monitoring and chargeback is difficult to achieve

Summary

In general, it is likely that most customers will use both approaches for delivering sandboxes to their project teams. Where there are hundreds of projects taking place at the same time, both approaches will probably create additional work for the DBA and systems administrators, who have to monitor and track lots of workspaces and/or plan, monitor and track resource usage across lots of database instances. Ideally what we need is an approach that can easily support the deployment of thousands of sandboxes, offers simple and fast provisioning, leaves data in place to prevent unnecessary movement of large volumes of data, provides a single centrally managed platform and, most importantly, allows for expansion/reduction of resources as needed across all sandboxes. Is this possible?

Building Sandboxes with Database 12c

The good news is that the new features we introduced as part of Database 12c combine all the best parts of the sandboxing features from the pre-12c approach with none of the drawbacks. Specifically, Database 12c introduced the concept of a multitenant database. This was originally positioned as a way to consolidate databases and applications under the control of a single environment but the approach works amazingly well for sandboxes. Therefore, with 12c you can now deploy sandboxes into the cloud!

This new feature (Oracle Multitenant) delivers a new architecture that provides a multitenant container database to hold a series of pluggable databases, or in this case sandboxes. There is a great quick overview of the new multitenant feature available on YouTube; follow the link below to watch the video:

Video: Oracle Multitenant Architecture

The great thing about the multitenant feature is that it offers a very fast and efficient way of creating new sandboxes. It is a bit like the template feature in Database Configuration Assistant with one important difference: deploying a new pluggable sandbox takes seconds, as shown here:

The above is taken from the Multitenant infographic, which is here: http://www.oracle.com/us/products/database/oracle-multitenant-infographic-1961308.pdf. The actual speed at which we can deploy pluggable sandboxes is outlined in the graph below:

The first bar on the graph shows the time taken to create a “standard” database, which involves copying template data files and then configuring the instance, which can take a considerable amount of time. The second bar shows the time taken to create a new pluggable sandbox, which is significantly faster. The third bar shows that we can deliver pluggable sandboxes in real time by using the built-in “clone” feature. This allows us to deploy a new pluggable sandbox which is able to “see” the source data in the PDB which acted as the source for the clone. The cloned pluggable sandbox also gets access to new/refreshed data as it is changed in the source system. This means that our cloned pluggable sandbox is always up to date with the very latest data.

What is a clone?

Provisioning a sandbox that is a copy of an existing database, so the project teams have access to existing data sets, used to be a lengthy multistep process. Multitenant provides a capability called cloning. It is now possible to create local clones (from a pluggable database in the same container database) or remote clones (across a database link to a remote container database). What are the benefits of using this feature? Firstly, it is very simple: a single SQL statement. Secondly, it keeps the overall security requirements nice and simple because all that is required is database access and not OS access. Lastly, business users and data scientists like it because the whole process is so fast. The process of creating full clones is fast. Even faster, ludicrously fast, is the process for creating snapshot clones. Snapshot clones are built on a capability of the underlying file system, where available, called copy-on-write. This makes it possible to request and deploy a pre-populated pluggable sandbox in a matter of seconds!

Now we understand the overall architecture and speed at which we can deploy new sandboxes, how do you actually request a new sandbox/PDB?

Self-Service provisioning of sandboxes

In prior releases of the Oracle Database a lot of the tools and features that were used for sandboxing were typically aimed at DBAs. Today, many business users and data scientists want to control and own the process of requesting and building a sandbox. As part of Database 12.1.0.2 we have provided a new self-service application that DBAs can make available to their business teams for self-service provisioning. This new application provides a simple 4-step process for creating a new pluggable sandbox:

Step 1: Start the APEX self-service provisioning app and click on the “New Database” link:

Step 2: Choose whether to create a new sandbox/pluggable database or plug in an existing database that has been moved from another container. In the majority of cases business users will provision a new sandbox:

Step 3: Determine if you are going to create a new empty sandbox or clone an existing database (mart or warehouse or existing sandbox). In the example below we have selected an existing sandbox, MIKETEST, and the option to clone this database is available at the bottom of the screen.

Step 4: Check the details and confirm the deployment.

As mentioned earlier, the speed at which pluggable sandboxes can be created means that business users can request a new pluggable sandbox and have the environment ready for use in a matter of seconds (of course, your mileage might vary according to your hardware!).
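For DBAs who prefer to work by hand, the clone option in Step 3 corresponds to a single `CREATE PLUGGABLE DATABASE ... FROM` statement. The Python sketch below only builds that statement as text; it is not how the self-service application is implemented. The new sandbox name `SANDBOX2` and the database link name are hypothetical, and the sketch assumes Oracle Managed Files are in use so that no file name conversion clause is needed.

```python
import re

# Conservative identifier check: letters, digits and underscores only.
_IDENT = re.compile(r"^[A-Za-z][A-Za-z0-9_]{0,29}$")


def clone_sandbox_sql(new_pdb, source_pdb, snapshot=False, db_link=None):
    """Build a CREATE PLUGGABLE DATABASE statement that clones source_pdb.

    snapshot=True adds SNAPSHOT COPY, which relies on copy-on-write
    support in the underlying file system. db_link, if given, names a
    database link to a remote container database (a remote clone).
    """
    names = [new_pdb, source_pdb] + ([db_link] if db_link else [])
    for name in names:
        if not _IDENT.match(name):
            raise ValueError(f"unsafe identifier: {name!r}")
    source = f"{source_pdb}@{db_link}" if db_link else source_pdb
    sql = f"CREATE PLUGGABLE DATABASE {new_pdb} FROM {source}"
    if snapshot:
        sql += " SNAPSHOT COPY"
    return sql + ";"


if __name__ == "__main__":
    # MIKETEST is the sandbox from the example; SANDBOX2 is hypothetical.
    print(clone_sandbox_sql("SANDBOX2", "MIKETEST"))
    print(clone_sandbox_sql("SANDBOX2", "MIKETEST", snapshot=True))
```

The identifier check is there because the names are spliced directly into SQL text; anything that does not look like a plain database name is rejected rather than quoted.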

Workspace Manager and Multitenant

In many cases there will still be a need to have an environment where the same data sets can be shared across multiple project teams, with each team working independently on their own view of the data. This is exactly the sort of use case for Workspace Manager, as described earlier, and the good news is that Workspace Manager functions transparently in a multitenant architecture. Workspaces benefit from the efficient administration of one multitenant container database, and the separation and resource prioritisation allowed by multiple pluggable databases.

SQL Developer and Multitenant

For data scientists and more sophisticated business users the latest version of SQL Developer is now multitenant aware. Therefore, rather than using the self-service application, all the required operations for creating and managing a sandbox are now available from within SQL Developer as shown here:

From within SQL Developer you can: deploy a sandbox (CREATE PLUGGABLE or CLONE PLUGGABLE), move a sandbox (UNPLUG and PLUG) and delete a sandbox (DROP PLUGGABLE).
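The move and delete operations listed above map onto a handful of SQL statements. As a rough guide to what the tool is doing on your behalf, the Python sketch below generates those statements as text. The sandbox name and manifest path are hypothetical, and the exact statements SQL Developer issues may differ from this sketch.

```python
def unplug_sql(pdb, manifest_path):
    """Statements to close a sandbox and unplug it into an XML manifest."""
    return [
        f"ALTER PLUGGABLE DATABASE {pdb} CLOSE IMMEDIATE;",
        f"ALTER PLUGGABLE DATABASE {pdb} UNPLUG INTO '{manifest_path}';",
        # The unplugged PDB must be dropped from the source container;
        # KEEP DATAFILES leaves its files on disk for the move.
        f"DROP PLUGGABLE DATABASE {pdb} KEEP DATAFILES;",
    ]


def plug_sql(pdb, manifest_path):
    """Statements to plug a sandbox into the target container and open it.

    NOCOPY assumes the data files are already where the target container
    can use them, for example on shared storage.
    """
    return [
        f"CREATE PLUGGABLE DATABASE {pdb} USING '{manifest_path}' "
        "NOCOPY TEMPFILE REUSE;",
        f"ALTER PLUGGABLE DATABASE {pdb} OPEN;",
    ]


def drop_sql(pdb):
    """Statements to delete a sandbox together with its data files."""
    return [
        f"ALTER PLUGGABLE DATABASE {pdb} CLOSE IMMEDIATE;",
        f"DROP PLUGGABLE DATABASE {pdb} INCLUDING DATAFILES;",
    ]


if __name__ == "__main__":
    # Both the sandbox name and the manifest path are hypothetical.
    manifest = "/u01/app/oracle/unplug/sandbox1.xml"
    for stmt in unplug_sql("SANDBOX1", manifest) + plug_sql("SANDBOX1", manifest):
        print(stmt)
```

Note the difference between the two drops: moving a sandbox keeps its data files, while deleting one removes them, so it is worth keeping the two operations in clearly separate functions.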

SQL Developer offers fine-grained control, and most business users and data scientists will probably need some guidance from their DBA on the various parameter settings for deploying a new sandbox. For more information about using SQL Developer to manage (create, modify, plug/unplug) pluggable sandboxes see the online tutorial on the Oracle Learning Library: https://apex.oracle.com/pls/apex/f?p=44785:24:113456037406764:::24:P24_CONTENT_ID%2CP24_PROD_SECTION_GRP_ID%2CP24_PREV_PAGE:7649%2C%2C24
